Notice: Please cite the following paper if you are using TaintBench:

Luo, L., Pauck, F., Piskachev, G. et al. TaintBench: Automatic real-world malware benchmarking of Android taint analyses. Empir Software Eng 27, 16 (2022). https://doi.org/10.1007/s10664-021-10013-5

How does TaintBench compare to DroidBench?

List of Evaluated Benchmark Suites

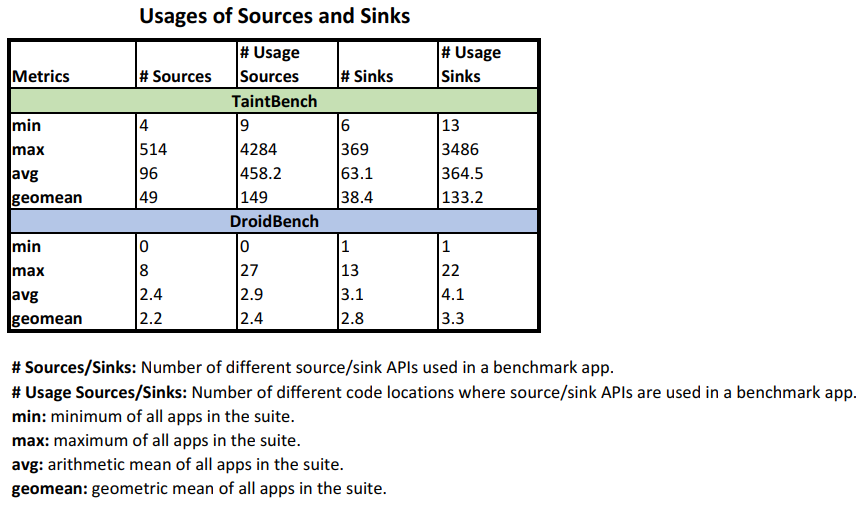

Usage of Sources and Sinks

- Lists of potential sources and sinks:

- Results:

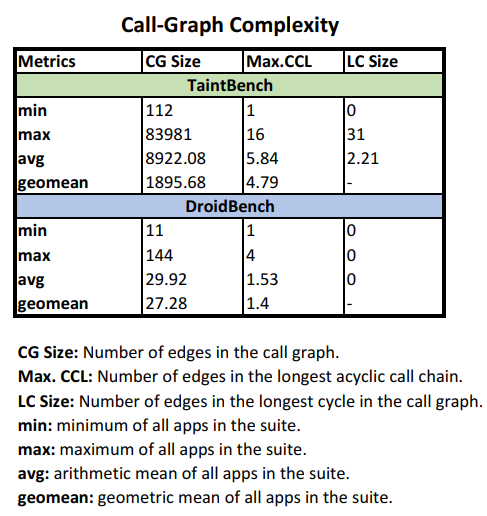

Call-Graph Complexity

- Results:

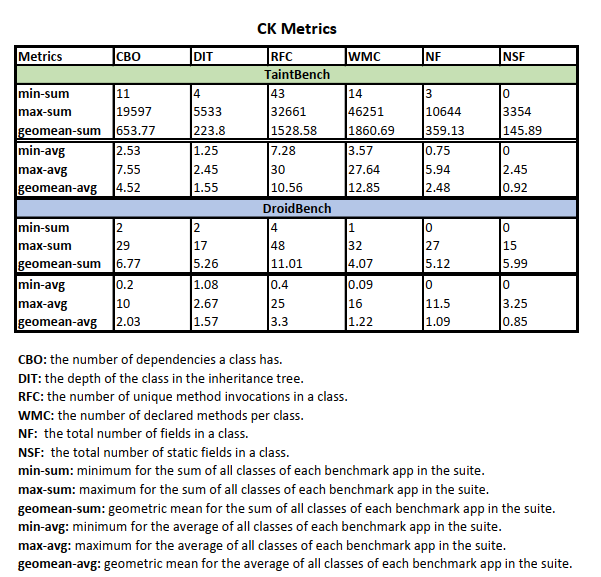

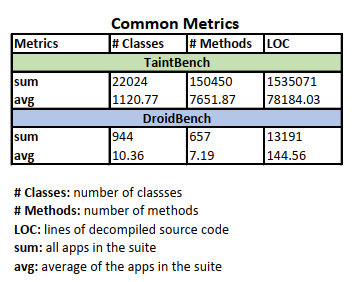

Code Complexity

- Results:

How effective are taint analysis tools on TaintBench compared to DroidBench?

List of Evaluated Tools

| Tool | Version | Source |
|---|---|---|
| Amandroid | November 2017 (3.1.2) | Link |
| Amandroid* | December 2018 (3.2.0) | Link |
| FlowDroid | April 2017 (Nightly) | Link |
| FlowDroid* | January 2019 (2.7.1) | Link |

Experiment 1 (Default)

Configuration: All tools are executed in their default configuration. Sources and sinks configured for the tools can be found below:

Experiment 2 (Suite-level)

Configuration: All tools are configured with the sources and sinks defined in the benchmark suite. The sources and sinks of each benchmark suite, as configured for the tools, can be found below:

Experiment 3 (App-level)

Configuration: For each benchmark app, a list of sources and sinks defined in this app is used to configure all tools. Each tool analyzes each benchmark app with the associated list of sources and sinks.
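A minimal Python sketch of how such an app-level configuration could be assembled. It assumes each benchmark case has been reduced to one source and one sink method signature, and that the tool reads FlowDroid's plain-text sources/sinks format (`<signature> -> _SOURCE_` or `-> _SINK_`). The `Case` record and `app_level_config` function are hypothetical and not part of TaintBench; Amandroid uses its own configuration format, so a real setup would need a separate writer for it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    """Hypothetical record for one benchmark case (one taint flow)."""
    case_id: int
    source: str  # Soot-style method signature of the source
    sink: str    # Soot-style method signature of the sink


def app_level_config(cases):
    """Union of all sources and sinks of one app, in FlowDroid-style text format.

    Duplicates are removed, so a source shared by several cases appears once.
    """
    sources = sorted({c.source for c in cases})
    sinks = sorted({c.sink for c in cases})
    lines = [f"{s} -> _SOURCE_" for s in sources]
    lines += [f"{s} -> _SINK_" for s in sinks]
    return "\n".join(lines) + "\n"


DEVICE_ID = "<android.telephony.TelephonyManager: java.lang.String getDeviceId()>"
LOG_D = "<android.util.Log: int d(java.lang.String,java.lang.String)>"
SEND_SMS = ("<android.telephony.SmsManager: void sendTextMessage(java.lang.String,"
            "java.lang.String,java.lang.String,android.app.PendingIntent,"
            "android.app.PendingIntent)>")

# Two illustrative cases of one app that share the same source.
cases = [Case(1, DEVICE_ID, LOG_D), Case(2, DEVICE_ID, SEND_SMS)]
print(app_level_config(cases))
```

Because the lists are merged per app, every tool run sees all sources and sinks of that app at once, which is the key difference from the case-level setup.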

Experiment 4 (Case-level)

Configuration: For each benchmark case (taint flow), only the source and sink defined in this case are used to configure all tools.

If a benchmark app contains N benchmark cases, each tool analyzes the app N times. Each time, the tools are configured with the source and sink of the respective benchmark case.
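The case-level procedure can be sketched as a loop that produces one two-line configuration per case and triggers one analysis run for each. This is an illustration under stated assumptions: the `Case` record, `case_level_configs`, `run_case_level` and the `analyze` callback (standing in for an actual tool invocation) are hypothetical, and the configuration text assumes FlowDroid's plain-text sources/sinks format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    """Hypothetical record for one benchmark case (one taint flow)."""
    case_id: int
    source: str  # Soot-style method signature of the source
    sink: str    # Soot-style method signature of the sink


def case_level_configs(cases):
    """Yield (case_id, config_text): exactly one source and one sink per case."""
    for c in cases:
        yield c.case_id, f"{c.source} -> _SOURCE_\n{c.sink} -> _SINK_\n"


def run_case_level(apk_path, cases, analyze):
    """Analyze the same APK once per case, i.e. N runs for N cases.

    `analyze(apk_path, config_text)` is a hypothetical callback that would
    launch the taint analysis tool and return whether the flow was reported.
    """
    return {case_id: analyze(apk_path, cfg)
            for case_id, cfg in case_level_configs(cases)}


DEVICE_ID = "<android.telephony.TelephonyManager: java.lang.String getDeviceId()>"
LOG_D = "<android.util.Log: int d(java.lang.String,java.lang.String)>"
cases = [Case(1, DEVICE_ID, LOG_D), Case(2, LOG_D, LOG_D)]
```

Running each case in isolation costs N analyses per app, but it ensures that a reported flow can only belong to the case under test.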

Experiment 5 (Minified App)

For each positive benchmark case, a minified APK is generated by the MinApkGenerator.

Experiment 6 (Delta App)

For each positive benchmark case, a delta APK is generated by the DeltaApkGenerator.